RAG vs Finetuning - Your Best Approach to Boost LLM Application.

By A Mystery Man Writer

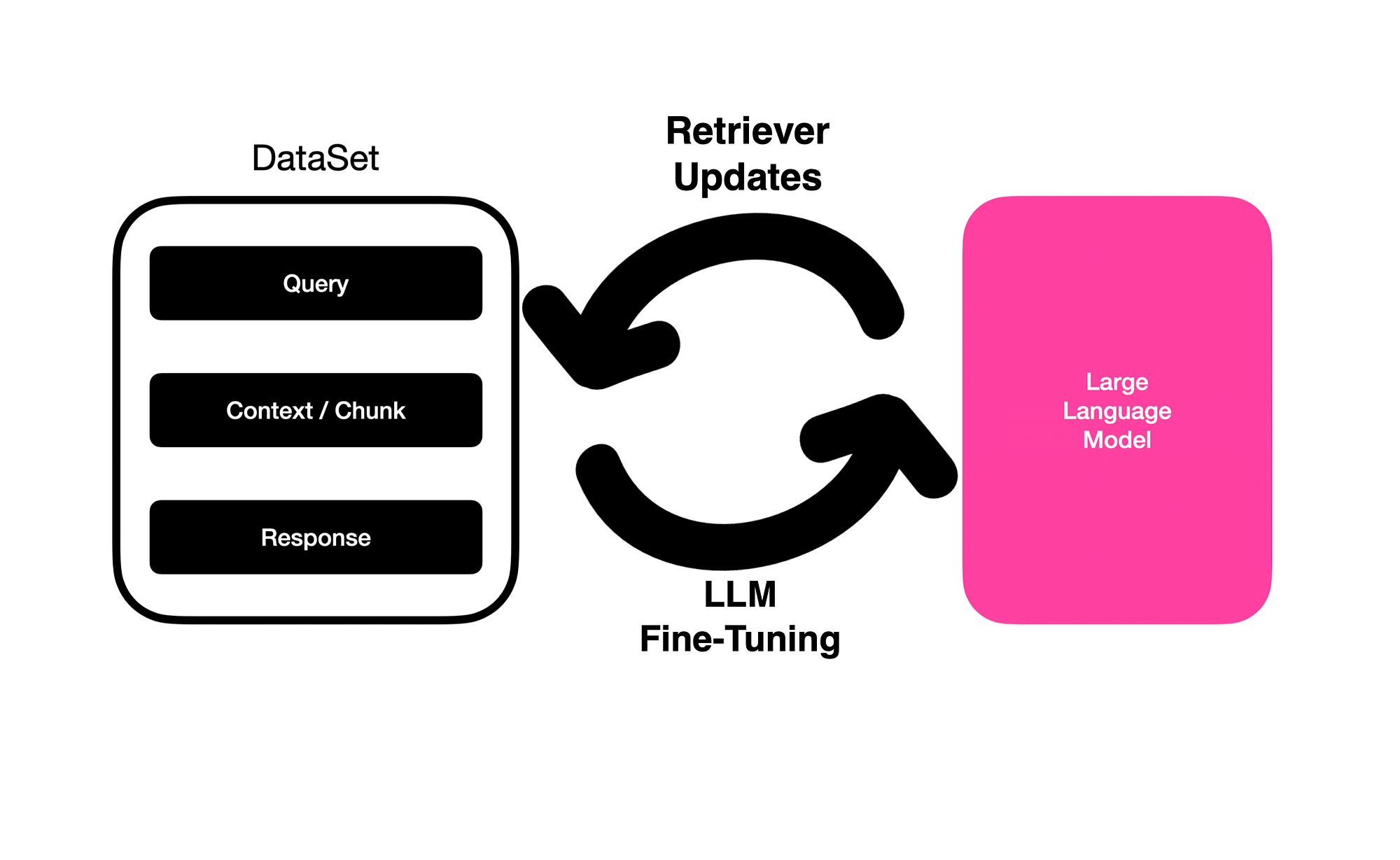

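There are two main approaches to improving the performance of large language models (LLMs) on specific tasks: finetuning and retrieval-augmented generation (RAG). Finetuning updates the weights of an LLM that has already been pre-trained on a large corpus of text and code, typically by continuing training on a smaller task-specific dataset. RAG leaves the weights unchanged and instead retrieves relevant documents at query time and adds them to the prompt.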
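To make the contrast concrete: finetuning changes the model's weights, while the retrieval-based approach keeps them fixed and supplies relevant text in the prompt. The following is a minimal sketch of the retrieval side only. It assumes a toy bag-of-words cosine score in place of a real embedding model, and the names `tokenize`, `cosine`, `retrieve`, and `build_prompt`, as well as the sample documents, are hypothetical and chosen for illustration.

```python
import math
import re
from collections import Counter


def tokenize(text):
    # Lowercase word tokens; a real system would likely use an embedding model.
    return re.findall(r"[a-z0-9]+", text.lower())


def cosine(a, b):
    # Cosine similarity between two bag-of-words Counters.
    dot = sum(a[t] * b[t] for t in a if t in b)
    norm_a = math.sqrt(sum(v * v for v in a.values()))
    norm_b = math.sqrt(sum(v * v for v in b.values()))
    return dot / (norm_a * norm_b) if norm_a and norm_b else 0.0


def retrieve(query, documents, k=1):
    # Rank documents by similarity to the query and return the top k.
    q = Counter(tokenize(query))
    ranked = sorted(
        documents,
        key=lambda d: cosine(q, Counter(tokenize(d))),
        reverse=True,
    )
    return ranked[:k]


def build_prompt(query, documents, k=1):
    # Prepend retrieved context to the question; no model weights are touched.
    context = "\n".join(retrieve(query, documents, k))
    return f"Context:\n{context}\n\nQuestion: {query}\nAnswer:"


# Hypothetical knowledge base for illustration.
docs = [
    "The refund window for all orders is 30 days.",
    "Support is available on weekdays from 9 to 5.",
]
prompt = build_prompt("How long is the refund window?", docs)
```

The resulting `prompt` string would then be sent to whichever LLM the application uses. Updating the knowledge base in this setup only requires editing `docs`, whereas finetuning would require another training run.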
